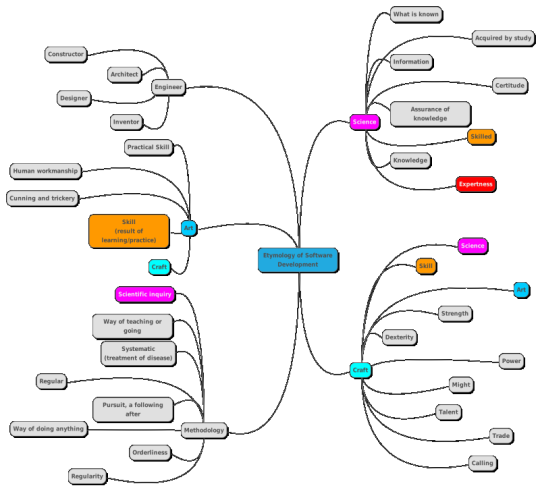

Context - Is Nanotechnology Used In Artificial Intelligence?

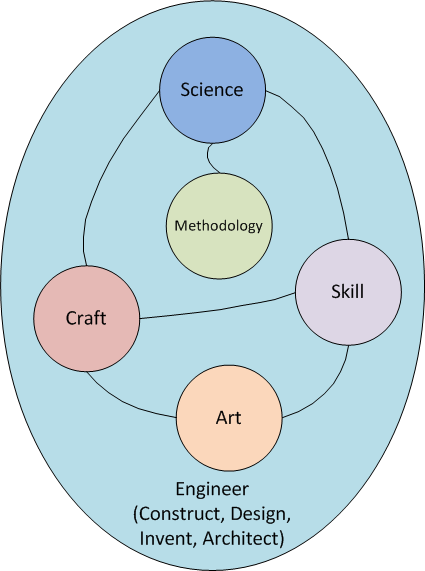

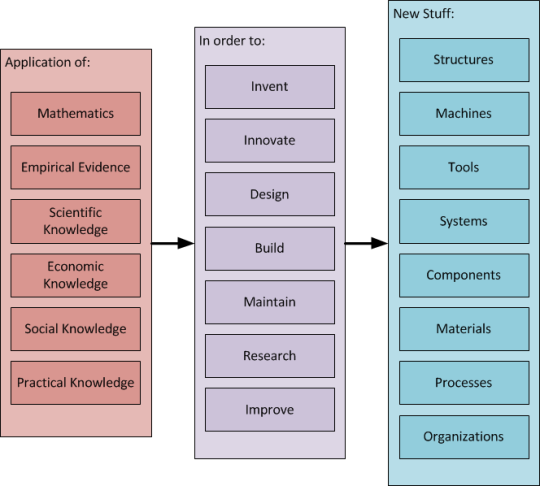

Biotechnology, information technology, and nanotechnology are three technologies on which current scientific and technical progress increasingly depends.

Combining bioscience, artificial intelligence (AI), and nanotechnology is expected to bring about another revolution in science and technology, one that has been anticipated for more than a decade.

Nonetheless, the anticipated integration of interdisciplinary research is still in progress.

- Nanotechnology combines knowledge from engineering and the physical sciences; it is one of the most significant emerging technology sectors, with applications in medicine, engineering, and agriculture.

- AI is a way of building human-like reasoning into a technological device. It draws on the study of how the human brain thinks, learns, decides, and works as it tries to solve problems.

Its most common and successful models, such as artificial neural networks (ANNs) and similar algorithms, are largely inspired by biological structures.

An important aim of AI is to improve machine functions associated with human intelligence, such as reasoning, thinking, and problem solving.

- AI is being used in a growing number of disciplines. Within AI itself, machine learning, deep learning, and ANNs have become effective approaches in their own right, and the number of domains and industries in which AI now plays a leading role keeps growing.

- AI, in conjunction with the Internet of Things (IoT) and other emerging sectors, has already changed many production and monitoring processes across a variety of industries, and the trend is continuing.

Nanotechnology largely deals with sophisticated systems that are not always compatible with certain parts of AI.

Even so, nanotechnology is regarded as a technology with which AI is expected to converge.

Though such a picture may still seem futuristic, existing technology has begun to show signs of this convergence.

- Combining these two technologies may bring significant advances, from faster AI-assisted nanotechnology research and the creation of state-of-the-art materials to a wider range of AI applications built on nanotechnology-based computing devices.

- Joint research may not only bring the two technologies together but also boost research in each area, possibly leading to a range of new information and communication technologies.

- Biotechnology, cognitive science, nanotechnology, robotics, AI, information and communication technology (ICT), and the sciences that deal with them are all subject to wider political and societal debate.

- Meanwhile, AI has been used in nanoscience research for a variety of purposes, including the interpretation of experimental techniques and support for the design of novel nanodevices and nanomaterials.

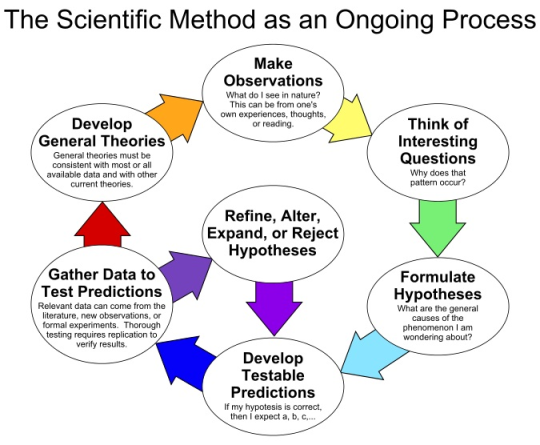

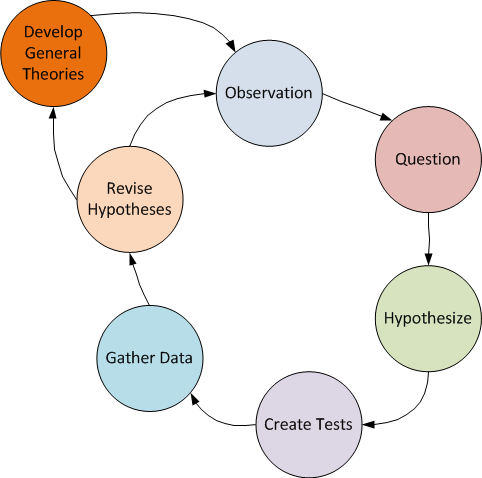

There are various reasons why AI paradigms are used in nanoresearch.

- Nanotechnology is constrained by the physical limits of its scale: the physical laws that govern nanoscale systems differ markedly from those that apply at larger scales.

- As a result, one of the problems nanotechnology must solve is the correct interpretation of the results obtained from any such system (Ly et al. 2011).

- To make matters worse, in many systems the measured signal depends strongly on numerous factors.

- In these cases it is difficult to develop theoretical approximations, so simulation methods have been used to obtain accurate interpretations of the experimental results.

Various machine learning paradigms from AI may be used both to produce research results and to develop nanoapplications in the future.

- These methods are particularly useful when many interrelated factors must be handled at the same time, and they can effectively represent and approximate complex or unknown data or functions (Mitchell 1997; Bishop 2006).

- Machine learning methods such as ANNs, which consist of a collection of linked nodes whose connection weights are adjusted by a supervised or unsupervised algorithm, are valuable for learning these kinds of functions.
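The idea of weighted, linked nodes trained by a supervised algorithm can be illustrated with the smallest possible case, a single artificial neuron (a perceptron). The sketch below is only a toy: the four labelled samples, the feature meanings, and the function names are assumptions made for illustration and are not taken from any of the cited studies.

```python
# Toy supervised learning: a single artificial neuron (perceptron).
# The data set and its feature meanings are invented for illustration.

def step(value):
    """Threshold activation: the node outputs 1 when its weighted input is non-negative."""
    return 1 if value >= 0 else 0


def predict(weights, bias, inputs):
    """Weighted sum of the inputs followed by the threshold activation."""
    return step(sum(w * x for w, x in zip(weights, inputs)) + bias)


def train_perceptron(samples, epochs=20, learning_rate=1.0):
    """Adjust the link weights with the classic perceptron learning rule."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            # The error is 0 when the prediction is right, +1 or -1 otherwise.
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Hypothetical labelled examples: (feature_a, feature_b) -> class label.
SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(SAMPLES)
predictions = [predict(weights, bias, inputs) for inputs, _ in SAMPLES]
print(predictions)  # prints [0, 0, 0, 1]
```

Real ANNs stack many such nodes in layers and use smoother activations and gradient-based training, but the principle of learning by adjusting link weights from labelled examples is the same.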

Other AI approaches can tackle a variety of optimization and search challenges.

- Various machine learning approaches can be used in nanotechnology research for complex classification, prediction, correlation, data mining, clustering, and control problems.

- These techniques include decision trees, support vector machines, Bayesian networks, and others.

- A few studies have also examined how AI techniques can exploit the increased computational power offered by future nanomaterials that are developed by nanoscience and used to fabricate nanodevices. Nanocomputing is expected to provide powerful dedicated architectures for running machine learning techniques.

UTILITY OF ARTIFICIAL INTELLIGENCE

The following section explores the bidirectional link between AI and nanotechnology via a variety of examples and applications.

AI IN SCANNING PROBE MICROSCOPY

In the nanoworld, scanning probe microscopy (SPM) is the most widely used imaging technology.

- The term covers a variety of methods that obtain images from the interaction between a sample and a probe.

- In scanning tunneling microscopy, for example, the tunneling current between the sample and the probe is used to characterize the sample topography.

- After this first instrument was introduced, other techniques were developed by changing the interaction between the tip and the sample.

SPM can also be used to manipulate matter down to the level of individual atoms.

Despite numerous efforts to improve resolution and the ability to manipulate atoms, interpreting the microscopy signals remains a challenge.

The probe–sample interactions are difficult to understand and are influenced by a variety of factors.

AI methods could be of great help in resolving such problems.

- In recent years, advances in multimodal SPM imaging, which acquires more complementary information about the sample, have produced massive quantities of data, making it even more difficult to understand individual sample properties.

- To address this problem, a technique known as functional recognition imaging (FR-SPM) has been developed. It seeks a direct identification of local behaviors from the measured spectroscopic responses, using neural networks trained on examples supplied by an expert.

- The cellular genetic algorithm (cGA), a subclass of genetic algorithms (GAs), is an evolutionary optimization method. It has been used to automate SPM imaging through software that optimizes the state of the probe and the related control parameters.

- As a result, high-quality atomic-resolution images can be acquired with no human involvement other than the preparation of samples and tips (Huy et al. 2009; Woolley et al. 2011).

- ANNs are widely used to classify behavioral, structural, and physical properties of materials at the nanoscale. These materials are employed in a wide range of applications, including carbon nanotubes, quantum-dot semiconductor optics and devices, chemical technology, and manufacturing.

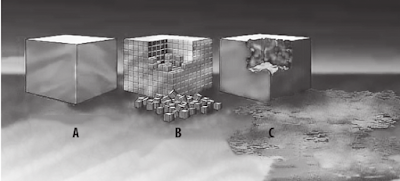

NANOSYSTEMS DESIGN

ANNs have recently been used to investigate the nonlinear relationship between input factors and output responses in the deposition of transparent conductive oxides.

- This kind of thin film is now used as an electrode in optoelectronic devices such as solar cells, organic LEDs, and flat-panel displays (Bhosle et al. 2006).

- Evolutionary optimization has also produced better nanoantenna geometries, which outperform the best radio-wave-type reference antennas.

- According to the GA, the fittest antenna geometry combines the magnetic resonance of a split-ring structure with the electric resonance of a linear dipole antenna (Feichtner et al. 2012).

- By studying the working principles of the evolved geometries, this approach can produce nanoantenna structures for specific applications and suggest novel design strategies.

- GAs have also been applied in nano-optics.

- The careful design of nanoparticle light concentrators will have a significant influence on a variety of nano-optics applications, including optical manipulators, solar cells, plasmon-enhanced photodetectors, modulators, and nonlinear optical devices.
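The evolutionary optimization described above follows a simple loop: score candidate designs, keep the fittest, and create new candidates by crossover and mutation. The sketch below is a generic GA on a made-up problem, not the method of Feichtner et al. or any other cited study. The three "design parameters", the `TARGET` values, and the fitness function are hypothetical stand-ins for what would, in practice, be an electromagnetic simulation of each candidate geometry.

```python
import random

# Hypothetical "ideal" design parameters, each normalized to [0, 1].
# In a real study the fitness would come from a physical simulation instead.
TARGET = [0.2, 0.8, 0.5]


def fitness(genome):
    """Higher is better: negative squared distance to the hypothetical target."""
    return -sum((g - t) ** 2 for g, t in zip(genome, TARGET))


def crossover(parent_a, parent_b, rng):
    """Single-point crossover: head of one parent, tail of the other."""
    cut = rng.randrange(1, len(parent_a))
    return parent_a[:cut] + parent_b[cut:]


def mutate(genome, rng, rate=0.3, scale=0.1):
    """Perturb some genes with Gaussian noise, clamped to [0, 1]."""
    return [
        min(1.0, max(0.0, g + rng.gauss(0, scale))) if rng.random() < rate else g
        for g in genome
    ]


def evolve(pop_size=30, generations=40, seed=0):
    """Run the GA and return the best genome plus the best fitness per generation."""
    rng = random.Random(seed)
    population = [[rng.random() for _ in TARGET] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        history.append(fitness(population[0]))
        parents = population[: pop_size // 2]  # truncation selection
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng), rng)
            for _ in range(pop_size - 1)
        ]
        # Elitism: the best individual always survives unchanged.
        population = [population[0]] + children
    best = max(population, key=fitness)
    history.append(fitness(best))
    return best, history


best, history = evolve()
print("best design parameters:", [round(g, 3) for g in best])
print("best fitness:", round(history[-1], 6))
```

Because the best individual is always carried over, the best fitness can never get worse from one generation to the next; whether it reaches a useful optimum depends on the problem and on the chosen population size and mutation settings.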

AI AND NANOSCALE SIMULATION

One of the primary challenges that scientists face when working at the nanoscale is simulating the system under investigation, since true optical images cannot be obtained at this scale.

At the nanoscale, images must be interpreted, and numerical simulations are sometimes the best way to obtain an accurate idea of what an image shows.

- Nonetheless, simulations are difficult to use in many situations, and numerous factors must be considered to get an acceptable representation of the system.

- AI can help here by improving simulation performance and making data collection and interpretation easier.

- The use of ANNs in numerical simulations has been shown to be useful at the nanoscale in a variety of ways.

- To begin with, they can help configure simulation software, which otherwise has to be adjusted manually to keep a balance between numerical accuracy and physical meaning.

- Another use of ANNs in simulation software is to reduce the complexity of the associated parameter settings (Castellano-Hernández et al. 2012).

AI AND NANO COMPUTING

The combination of AI with existing and emerging nanocomputing technologies yields a wide range of applications (Service 2001; Bourianoff 2003).

- Since the inception of nanocomputers, AI paradigms have been used at different levels of modeling, designing, and building prototypes of nanocomputing devices.

- Machine learning techniques implemented on nano-hardware instead of semiconductor-based hardware may also provide the basis for a new generation of cheaper, more portable computing that can deliver high-performance computing for applications such as sensory data processing and control tasks (Uusitalo et al. 2011; Arlat et al. 2012).

- The highest hope for nanotechnology-enabled quantum computing and storage is that it will significantly improve our ability to solve very difficult NP-complete optimization problems.

Such problems arise in a variety of situations, but primarily with Big Data, which calls for "computational intelligence" (Ladd et al. 2010; Maurer et al. 2012).

In this context, natural computing usually draws on several approaches.

- Among the natural computing approaches, techniques such as DNA computing and quantum computing are now being thoroughly investigated (Darehmiraki 2010; Razzazi and Roayaei 2011; Ortlepp et al. 2012; Zha et al. 2013).

- DNA computing problems involve many variables. Here AI methods may be used to obtain a result from a small initial data set, avoiding the need to enumerate all possible solutions.

Other approaches worth considering are evolutionary algorithms and GAs.

Eventually, nanocomputing systems, only a few of which are bioinspired, will incorporate a broad range of new nanotechnologies.

As new physical operating principles, reconfigurable architectures, storage, and computational methods accumulate, these systems will be able to use new representations of information to implement machine learning paradigms that solve complex problems in a broad range of applications.

AI AND NANOTECHNOLOGY APPLICATIONS IN FOOD SCIENCE

Food science is rapidly evolving in tandem with nanotechnology.

- Nanotechnology answers the food industry's demand for technologies that are critical to keeping market leadership in food processing and to producing reliable, convenient, and tasty fresh food products.

- Nanoparticles are used both in preservatives and in packaging ("nano inside," "nano outside").

- Nanoscale food additives may be used to influence product flavor, nutritional content, shelf life, and texture; they may also be used to detect pathogens and to serve as quality indicators.

- Nanotechnology opens up a wide range of possibilities for new product development and food system applications.

- AI techniques support research and development of food additives and packaging.

THE USE OF NANOBOTS IN MEDICINE

It has been demonstrated that a large number of nanosystems are capable of interacting with living neurons.

- Some properties of carbon nanotubes (CNTs) make it possible to build nanotube detectors that could help implement pulse-train neural network functions, because the detectors are threshold devices similar to spiking neurons (Lee et al. 2003).

- The detection of volatile organic compounds using CNT-coated acoustic and optical sensors is a promising application of ANN algorithms.

- Better classification can be achieved by combining several acoustic and optical sensor modules, which is exactly the kind of setting in which ANNs can realize their full potential (Penza et al. 2005).

- In pharmacology and nanomedicine, ANNs are considered a well-established tool for analyzing and modeling nanoparticle preparation, with high potential impact in chronic disease (Zarogoulidis et al. 2012).
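The comparison between threshold detectors and spiking neurons made above can be made concrete with a minimal integrate-and-fire model: a unit accumulates its input, emits a pulse when the accumulated value reaches a threshold, and then resets. This is a generic textbook-style sketch, not a model of the nanotube detectors in Lee et al.; the function name, the integer units, and the `threshold` and `leak` values are assumptions chosen to keep the arithmetic easy to follow.

```python
def integrate_and_fire(inputs, threshold=3, leak=1):
    """Turn a sequence of input values into a pulse train of 0s and 1s.

    At each time step the stored potential first decays by `leak` (never
    below zero), then the new input is added. When the potential reaches
    `threshold`, the unit emits a pulse (1) and resets to zero.
    """
    potential = 0
    spikes = []
    for current in inputs:
        potential = max(0, potential - leak) + current
        if potential >= threshold:
            spikes.append(1)
            potential = 0
        else:
            spikes.append(0)
    return spikes


# A hypothetical input signal in arbitrary integer units.
print(integrate_and_fire([2, 2, 2, 0, 5]))  # prints [0, 1, 0, 0, 1]
```

Weak inputs that never accumulate past the threshold produce no pulses at all, which is the defining behavior of a threshold device.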

Nanobots developed by researchers at the University of California, San Diego (UCSD) are capable of purifying the blood of toxins produced by bacteria.

These nanobots are roughly 25 times smaller than the width of a human hair and can travel up to 35 micrometers per second, "swimming" through the blood while being propelled by ultrasound.

- Nanobots developed by MIT researchers in 2018 are so small and light that they could float through the air.

- The technology is made feasible by attaching 2D electronic components to minute particles measuring between one billionth and one millionth of a meter.

The result is a robot about the size of an ovum or a grain of sand.

- Built-in photodiode semiconductors, which detect light and convert it into an electrical signal, provide a steady supply of power to the environmental sensors installed in these robots.

- The small electrical charge they generate is enough for the technology to work without a battery.

As for applications, the researchers want to send these nanobots on missions to hard-to-reach places, such as pipelines and the human digestive system, for inspection.

Such a tiny probe could be released at the inlet, carried along with the flow through the pipe, and retrieved at the outlet.

Once it is retrieved, the data gathered by its sensors, such as the concentration of certain chemical compounds (for example hormones and enzymes) over space and time, can be downloaded and analyzed.

CONCLUSION

Many difficulties that arise in the research of nanotechnology might be solved using AI.

- The use of ANNs and GAs has been investigated in a variety of scenarios, ranging from the interpretation of scanning probe microscopy data to the characterization and classification of material properties at the nanoscale.

- This article has also looked at several efforts to build nanocomputing devices and use them to implement state-of-the-art AI paradigms.

- These ground-breaking efforts call for a true convergence of nanotechnology and AI in high-performance computer systems enabled by biomaterial-based nanocomputing devices.

Finally, the considerable potential impact of applying AI techniques has been shown.

- Nanotechnology, for its part, has been applied in biomedical research, therapeutic applications, and food science.

- Nanotechnology focuses on bottom-up design, while AI research usually takes a top-down approach to solving problems.

- The convergence of these areas will produce approaches to a variety of complex problems that require several levels of description and interaction.

- As discussed above, nanotechnology and AI can each contribute to this effort.

Find Jai on Twitter | LinkedIn | Instagram


REFERENCES

Arlat, Jean, Zbigniew Kalbarczyk, and Takashi Nanya. “Nanocomputing: Small devices, large dependability challenges.” IEEE Security & Privacy 10, no. 1 (2012): 69–72.

Bhosle, V., A. Tiwari, and J. Narayan. “Metallic conductivity and metal-semiconductor transition in Ga-doped ZnO.” Applied Physics Letters 88, no. 3 (2006): 032106.

Bishop, Christopher M. Pattern Recognition and Machine Learning. Berlin: Springer (2006).

Bourianoff, George. “The future of nanocomputing.” Computer 36, no. 8 (2003): 44–53.

Castellano-Hernández, Elena, Francisco B. Rodríguez, Eduardo Serrano, Pablo Varona, and Gomez Monivas Sacha. “The use of artificial neural networks in electrostatic force microscopy.” Nanoscale Research Letters 7, no. 1 (2012): 1–6.

Darehmiraki, Majid. “A semi-general method to solve the combinatorial optimization problems based on nanocomputing.” International Journal of Nanoscience 9, no. 5 (2010): 391–398.

Feichtner, Thorsten, Oleg Selig, Markus Kiunke, and Bert Hecht. “Evolutionary optimization of optical antennas.” Physical Review Letters 109, no. 12 (2012): 127701.

Huy, Nguyen Quang, Ong Yew Soon, Lim Meng Hiot, and Natalio Krasnogor. “Adaptive cellular memetic algorithms.” Evolutionary Computation 17, no. 2 (2009): 231–256.

Ladd, Thaddeus D., Fedor Jelezko, Raymond Laflamme, Yasunobu Nakamura, Christopher Monroe, and Jeremy Lloyd O’Brien. “Quantum computers.” Nature 464, no. 7285 (2010): 45–53.

Lee, Ian Y., Xiaolei Liu, Bart Kosko, and Chongwu Zhou. “Nanosignal processing: Stochastic resonance in carbon nanotubes that detect subthreshold signals.” Nano Letters 3, no. 12 (2003): 1683–1686.

Ly, Dung Q., Leonid Paramonov, Calvin Davidson, Jeremy Ramsden, Helen Wright, Nick Holliman, Jerry Hagon, Malcolm Heggie, and Charalampos Makatsoris. “The matter compiler-towards atomically precise engineering and manufacture.” Nanotechnology Perceptions 7, no. 3 (2011): 199–217.

Maurer, P.C., G. Kucsko, C. Latta, L. Jiang, N.Y. Yao, S.D. Bennett, F. Pastawski, D. Hunger, N. Chisholm, M. Markham, and D.J. Twitchen. “Room-temperature quantum bit memory exceeding one second.” Science 336, no. 6086 (2012): 1283–1286.

Mitchell, Tom M. Machine Learning. Maidenhead: McGraw Hill (1997).

Ortlepp, Thomas, Stephen R. Whiteley, Lizhen Zheng, Xiaofan Meng, and Theodore Van Duzer. “High-speed hybrid superconductor-to-semiconductor interface circuit with ultra-low power consumption.” IEEE Transactions on Applied Superconductivity 23, no. 3 (2012): 1400104.

Penza, M., G. Cassano, P. Aversa, A. Cusano, A. Cutolo, M. Giordano, and L. Nicolais. “Carbon nanotube acoustic and optical sensors for volatile organic compound detection.” Nanotechnology 16, no. 11 (2005): 2536.

Razzazi, Mohammadreza, and Mehdy Roayaei. “Using sticker model of DNA computing to solve domatic partition, kernel and induced path problems.” Information Sciences 181, no. 17 (2011): 3581–3600.

Service, Robert F. “Nanocomputing. Assembling nanocircuits from the bottom up.” Science 293, no. 5531 (2001): 782.

Uusitalo, Mikko A., Jaakko Peltonen, and Tapani Ryhänen. “Machine learning: How it can help nanocomputing.” Journal of Computational and Theoretical Nanoscience 8, no. 8 (2011): 1347–1363.

Woolley, Richard A. J., Julian Stirling, Adrian Radocea, Natalio Krasnogor, and Philip Moriarty. “Automated probe microscopy via evolutionary optimization at the atomic scale.” Applied Physics Letters 98, no. 25 (2011): 253104.

Zarogoulidis, Paul, Ekaterini Chatzaki, Konstantinos Porpodis, Kalliopi Domvri, Wolfgang Hohenforst-Schmidt, Eugene P. Goldberg, Nikos Karamanos, and Konstantinos Zarogoulidis. “Inhaled chemotherapy in lung cancer: Future concept of nanomedicine.” International Journal of Nanomedicine 7 (2012): 1551.

Zha, Xinwei, Chenzhi Yuan, and Yanpeng Zhang. “Generalized criterion for a maximally multi-qubit entangled state.” Laser Physics Letters 10, no. 4 (2013): 045201.